Unlock the Secrets of Precision, Recall, and F1 Score: A Simple Guide to Evaluation Metrics


In the world of machine learning and data science, evaluation metrics are the unsung heroes that help us gauge the performance of our models. Among these metrics, precision, recall, and F1 score are three of the most widely used, and most often misunderstood, measures. In this article, we'll explore what they are, how they're calculated, and why they're essential for evaluating classification models.

Precision, recall, and F1 score are not just buzzwords; they're crucial tools that help us understand how well our models are performing. "The choice of evaluation metric depends on the specific problem you're trying to solve," says Dr. Rachel Kim, a leading expert in machine learning. "Precision, recall, and F1 score are essential for evaluating the accuracy of classification models, which are used in a wide range of applications, from spam filtering to medical diagnosis."

In this article, we'll explore the ins and outs of precision, recall, and F1 score, providing you with a comprehensive guide to these essential evaluation metrics.

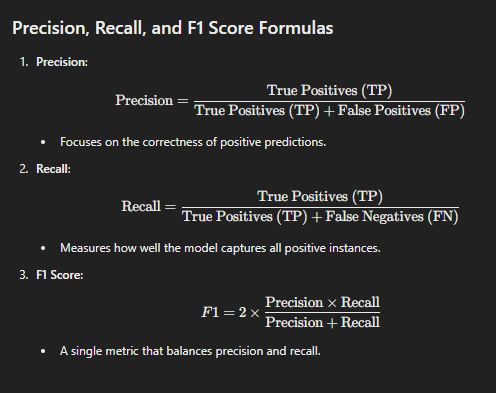

What is Precision?

Precision measures how trustworthy a model's positive predictions are: of all the instances the model labels as positive, it is the fraction that are actually positive. It's calculated as the number of true positives (instances correctly predicted as positive) divided by the sum of true positives and false positives (instances incorrectly predicted as positive). In other words, precision answers the question: when the model says "positive", how often is it right?
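In symbols, writing TP and FP for the counts of true and false positives (shorthand introduced here for brevity):

```latex
\text{Precision} = \frac{TP}{TP + FP}
```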

To illustrate this, let's consider a simple example. Suppose we're building a model to predict whether a customer will churn or not. Our model predicts that 100 customers will churn, but only 80 of them actually do. In this case, our model has a precision of 80% (80 true positives / (80 true positives + 20 false positives)).
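The churn calculation can be reproduced with a minimal Python sketch. The function name `precision` is our own, and returning 0.0 when the model makes no positive predictions is an assumed convention (scikit-learn's `precision_score` exposes a `zero_division` parameter for this case).

```python
def precision(tp: int, fp: int) -> float:
    """Fraction of predicted positives that are actually positive."""
    predicted_positive = tp + fp
    if predicted_positive == 0:
        # No positive predictions: precision is undefined; 0.0 is an assumed convention.
        return 0.0
    return tp / predicted_positive

# Churn example: 100 customers flagged as churners, 80 of whom actually churn.
tp, fp = 80, 20
print(precision(tp, fp))  # 0.8
```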

Why is Precision Important?

Precision is essential because it tells us how well our model avoids false positives. In many applications, false positives are costly. For instance, in medical diagnosis, a false positive can lead to unnecessary treatment and emotional distress for patients. In spam filtering, a false positive means a legitimate email is sent to the spam folder, where the recipient may never see it.

What is Recall?

Recall, on the other hand, measures a model's ability to find all of the positive instances. It's calculated as the number of true positives divided by the sum of true positives and false negatives (instances that are actually positive but incorrectly classified as negative). In other words, recall measures what fraction of the actually positive instances the model correctly identifies.
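To make the three counts concrete, the sketch below tallies true positives, false positives, and false negatives from two lists of binary labels (1 = positive, 0 = negative) and derives precision and recall from them. The labels are made-up toy data, and `confusion_counts` is a hypothetical helper, not a library function.

```python
from typing import Sequence

def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> tuple[int, int, int]:
    """Return (TP, FP, FN) for binary labels where 1 = positive and 0 = negative."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = fp = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == 1 and actual == 1:
            tp += 1  # correctly flagged positive
        elif predicted == 1 and actual == 0:
            fp += 1  # flagged as positive, actually negative
        elif predicted == 0 and actual == 1:
            fn += 1  # actual positive the model missed
        # predicted == 0 and actual == 0 is a true negative; not needed here
    return tp, fp, fn

# Toy data (hypothetical): actual labels vs. model predictions.
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 1, 0, 0]
tp, fp, fn = confusion_counts(y_true, y_pred)
print(tp, fp, fn)      # 3 1 2
print(tp / (tp + fp))  # precision: 0.75
print(tp / (tp + fn))  # recall: 0.6
```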

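Using the same example as before, our model correctly flags 80 customers who churn. Suppose another 20 customers also churn but the model did not flag them (false negatives). Our model then has a recall of 80% (80 true positives / (80 true positives + 20 false negatives)). Note that the 20 false positives from the precision example play no role here: recall depends only on the customers who actually churn.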
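A matching sketch for recall, using the counts from the churn example (80 true positives, 20 false negatives). As with precision, the helper name and the 0.0 fallback when there are no actual positives are assumptions made for illustration.

```python
def recall(tp: int, fn: int) -> float:
    """Fraction of actual positives that the model flagged."""
    actual_positive = tp + fn
    if actual_positive == 0:
        # No actual positives: recall is undefined; 0.0 is an assumed convention.
        return 0.0
    return tp / actual_positive

# Churn example: 80 churners flagged, 20 churners missed.
print(recall(80, 20))  # 0.8
```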

Why is Recall Important?

Recall is essential because it tells us how well our model avoids false negatives. In many applications, false negatives can be just as costly as false positives. For instance, in medical diagnosis, a false negative can lead to delayed treatment and poor patient outcomes. In credit risk assessment, where a positive means a borrower is likely to default, a false negative lets a high-risk loan through and can result in losses.

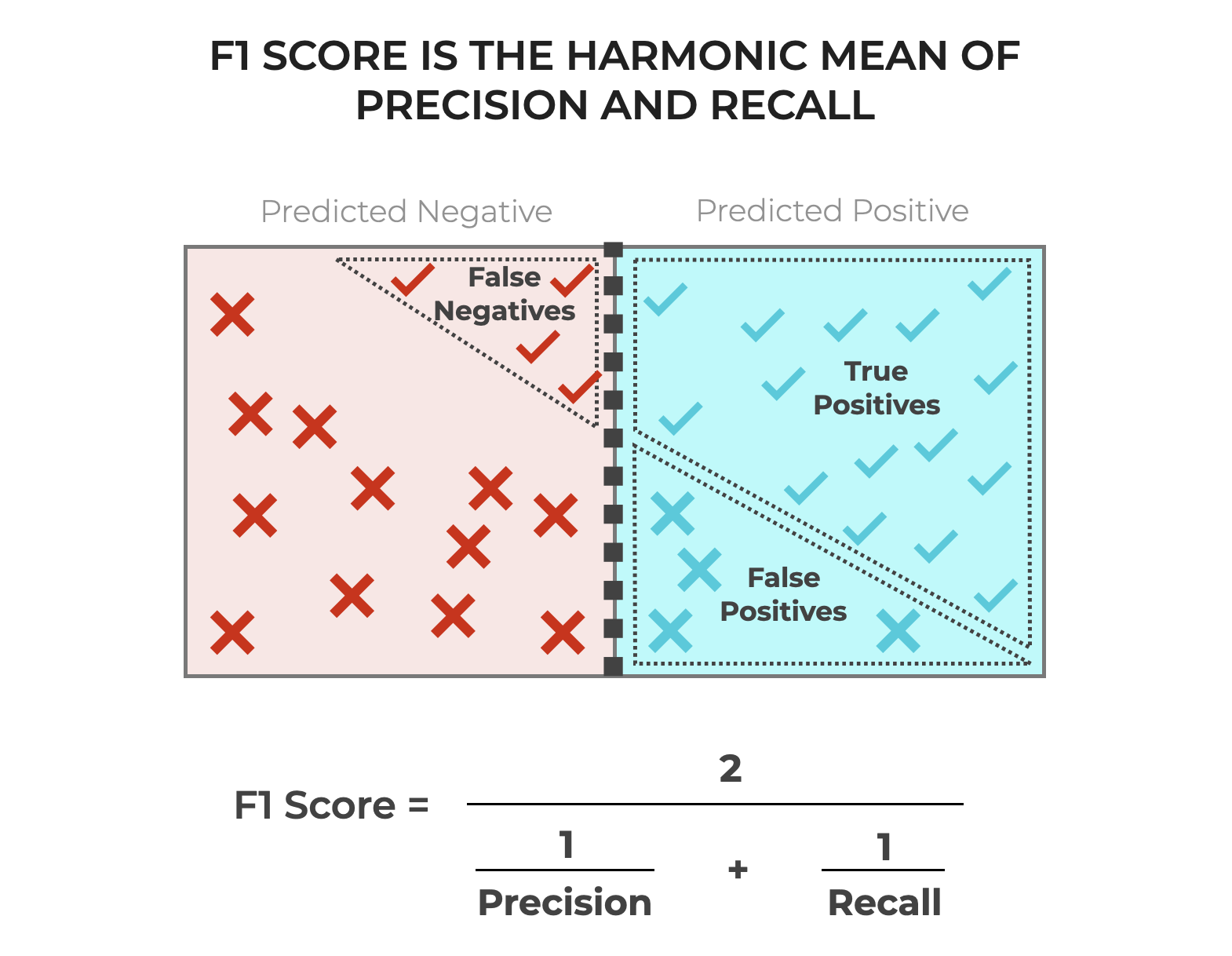

What is F1 Score?

The F1 score combines precision and recall into a single number, providing a balanced measure of a model's performance. It's calculated as 2 times the product of precision and recall, divided by the sum of precision and recall. In other words, the F1 score is the harmonic mean of precision and recall, which weights the two equally.
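Writing P for precision and R for recall (symbols introduced here), the formula, its harmonic-mean form, and the equivalent expression in raw counts are:

```latex
F_1 = \frac{2 \cdot P \cdot R}{P + R}
    = \frac{2}{\frac{1}{P} + \frac{1}{R}}
    = \frac{2\,TP}{2\,TP + FP + FN}
```

The last form follows by substituting P = TP / (TP + FP) and R = TP / (TP + FN), so that 1/P + 1/R = (2TP + FP + FN) / TP.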

Using the same example as before, if our model has a precision of 80% and a recall of 80%, its F1 score would be 0.8 (2 x 0.8 x 0.8 / (0.8 + 0.8)).
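A short Python sketch of the same calculation. The helper name `f1` is hypothetical, and returning 0.0 when precision and recall are both zero is an assumed convention. The second case uses made-up numbers to show why the harmonic mean matters: it stays low when either metric is low, whereas a simple average would not.

```python
def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention when both metrics are zero
    return 2 * precision * recall / (precision + recall)

print(round(f1(0.8, 0.8), 3))     # the example above: 0.8

# A lopsided (hypothetical) model: perfect precision, very low recall.
p, r = 1.0, 0.1
print(round((p + r) / 2, 3))      # arithmetic mean: 0.55
print(round(f1(p, r), 3))         # harmonic mean (F1): 0.182
```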

Why is F1 Score Important?

The F1 score is useful because it balances precision and recall. Because it is a harmonic mean, it is high only when both precision and recall are high, so a model cannot score well by maximizing one at the expense of the other. In many applications, F1 is the preferred single-number summary because it reflects both false positives and false negatives at once.

When to Use Precision, Recall, and F1 Score

Precision, recall, and F1 score are not interchangeable metrics, and each has its own strengths and weaknesses. Here's a brief guide on when to use each:

* **Precision**: Use precision when you need to minimize false positives, such as in medical diagnosis or spam filtering.

* **Recall**: Use recall when you need to minimize false negatives, such as in credit risk assessment or medical diagnosis.

* **F1 Score**: Use F1 score when you need a balanced measure of precision and recall, such as in classification problems where both false positives and false negatives are costly.

Common Misconceptions About Precision, Recall, and F1 Score

There are several common misconceptions about precision, recall, and F1 score that can lead to incorrect interpretations. Here are a few:

* **Precision and recall are mutually exclusive**: This is not true. Precision and recall can be high or low simultaneously, depending on the model's performance.

* **F1 score is always the best metric**: This is not true. While F1 score is a useful metric, it's not always the best choice. Depending on the problem, precision or recall may be more relevant.

* **Higher F1 score always means better model performance**: This is not true. F1 weights precision and recall equally and ignores true negatives entirely, so a model with a higher F1 score can still be the worse choice when false positives and false negatives carry very different costs, or when correctly rejecting negatives matters.
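One concrete reason a higher F1 score does not automatically mean a better model: F1 is computed only from true positives, false positives, and false negatives, so true negatives never enter it. The sketch below uses made-up counts for two hypothetical models with identical TP, FP, and FN but different numbers of true negatives; the helper names are illustrative.

```python
def f1_from_counts(tp: int, fp: int, fn: int) -> float:
    """F1 = 2TP / (2TP + FP + FN); true negatives do not appear."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + fp + fn + tn)

# Same TP/FP/FN, different true negatives (hypothetical counts).
for tn in (10, 900):
    print(tn, round(f1_from_counts(80, 20, 20), 3), round(accuracy(80, 20, 20, tn), 3))
# 10 0.8 0.692
# 900 0.8 0.961
```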

Conclusion

Precision, recall, and F1 score are essential evaluation metrics in machine learning and data science. By understanding what each metric measures and when to use it, you can make informed decisions about your model's performance and improve its accuracy. Remember, the choice of evaluation metric depends on the specific problem you're trying to solve, and precision, recall, and F1 score are just a few of the many metrics available to you.
